Title Page

Abstract

Contents

I. Introduction

1.1. Contribution

1.2. Organization

II. Background

2.1. Deep Neural Network Training

2.2. GPU Sharing Use Cases

2.3. Spatial GPU Sharing

III. Challenges for Memory Sharing

3.1. Memory Bloating

3.2. Workload Variability

3.3. Asynchrony with GPU Processing

IV. Design Overview

V. Scheduling Algorithm

5.1. Problem Definition

5.2. Time Shift Model

5.3. Memory Sharing Algorithm

VI. Memory Management with Concurrency

6.1. Tracking Memory Usage in GPU

6.2. Tensor Classification

6.3. Managing Memory Regions

VII. Evaluation

7.1. Training Same Models

7.2. Training Non-identical Models

7.3. Dynamic Memory Budget Change

7.4. Design Validation

VIII. Discussion

IX. Related Work

X. Concluding Remarks

References

Figure 1. Cumulative distribution of NASNet tensor lifespan.

Figure 2. Memory usage patterns for different DNN models over time.

Figure 3. GPU memory usage from CPU view (ResNet-50).

Figure 4. System architecture in Zico.

Figure 5. Throughput in training the same models....

Figure 6. Aggregated memory usage for training the same models....

Figure 7. Memory usage over time for training the same models....

Figure 8. Throughput in training the distinct models concurrently....

Figure 9. Memory usage over time for training NASNet and ResNet-110 concurrently.

Figure 10. Memory usage patterns on dynamic memory budget changes.

*표시는 필수 입력사항입니다.

| 전화번호 |

|---|

| 기사명 | 저자명 | 페이지 | 원문 | 기사목차 |

|---|

| 번호 | 발행일자 | 권호명 | 제본정보 | 자료실 | 원문 | 신청 페이지 |

|---|

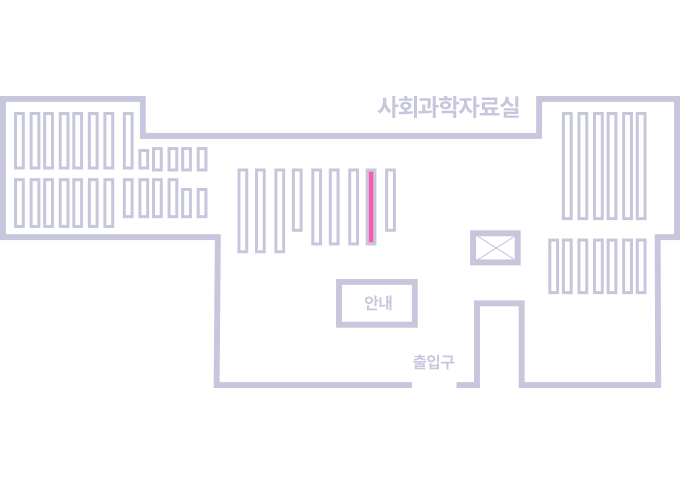

도서위치안내: / 서가번호:

우편복사 목록담기를 완료하였습니다.

*표시는 필수 입력사항입니다.

저장 되었습니다.